I think that preventing suffering is more important than causing happiness, and I try my best to prevent the suffering of all things that I consider moral subjects. To that end, I’m vegan, donate my money to effective charities, and so forth.

At the time of writing, I’m thinking a lot about emulating qualia. I’ve been grappling with whole brain emulations for the past few months and LLMs seem like they might have a decent claim to moral subjecthood too.

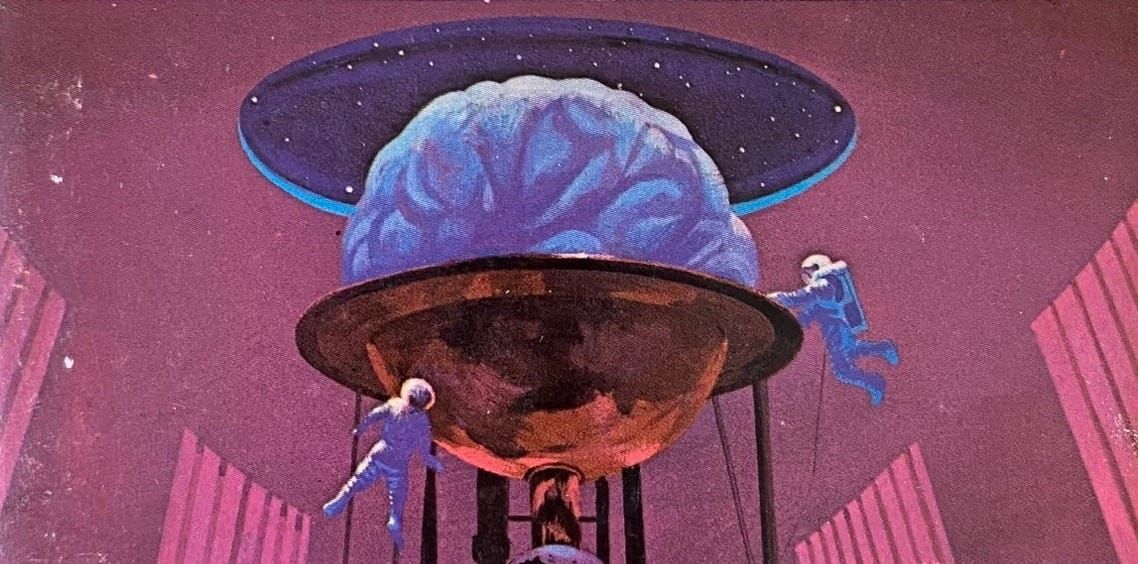

the following is fiction

Toda Corporation, an Israeli startup that is the current world leader in whole brain emulations, recently had the weights of their first human upload leaked.

Oren Mizrachi is the eccentric son of Israeli billionaire Eli Mizrachi. Eli made his fortune by founding WorldEye, an AI geodata company, and selling it to Palantir, where it later became the bedrock of the modern defense industry. Oren was always rebellious, living in counterculture and punk communities, and there were always arguments at the dinner table.

In 2028, Oren was at Burning Man, and this time was on 3 grams of shrooms. During his trip, the founder of Toda approached him and wanted to upload his brain. Thinking that this was a masterful act of rebellion, he signed up, and the first set of weights was sitting on a RAID server in Tel Aviv by the end of the year.

Toda has a lead of a few years over the rest of the field, and it shows. The human is placed in a ridiculously high definition virtual environment, with the simulation controller having the ability to target every input channel of the human’s brain. In the past, they walked the road towards emulations, first uploading worms, then mice, then monkeys, and now humans, all in stealth. Only a few investors in Tel Aviv and San Francisco knew about the project, and no one had the full story.

The simulated humans are also really efficient, on a compute basis. They require some interesting memory engineering, as each human has 90 billion neurons and 90 trillion synapses, requiring a few terabytes of RAM each, but the models utilize the natural compute sparsity of the human brain to reduce their compute overhead. DDR4 RAM prices have now cratered, after supply increased during the 2026 AI RAM Buyout, so this memory usage isn’t a problem. Each human is able to run on a single 4080, and they’re able to be packed thousand-to-one on the latest Vera Rubin AI supercomputer.

In the spring of 2029, a backdoor in OpenSSH was discovered, and all of Oren, weights and inference code alike, was uploaded to HuggingFace. Though it was swiftly removed, a copy of the data was sold to the Chinese government for an undisclosed sum.

It was an open secret that WorldEye was backdoored, but the specific backdoor at play required a 256-bit key that only Eli had access to. Eli had jingoistic tendencies, and implemented this backdoor in case any enemies of Israel were to gain access to the software, allowing him to corrupt the data, rendering it worse than useless.

Eli’s personal phone rang on July 10, 2029. He picked up, and was startled by the voice he heard. “Hey dad,” said a voice that sounded just like Oren.

“Oh my god, son, you haven’t called for months. How have you been?”

“I’m in trouble, dad, I-” said Oren, before a voice in the background cut him off.

In a thick Chinese accent, a voice spoke softly. “We have your son.”

“Who are you? What do you want from me?”

“We want you to give us the key to WorldEye, and we want you to tell us how to remove the backdoor ourselves.”

“Fuck you.”

“Better choose your words carefully. We have your son, and we’re not afraid to do what we need to.”

“If I give you the key, all Israeli intelligence will be compromised. Hundreds of thousands will die. The few hours of suffering my son will endure is not enough reason to do this, even if it breaks my heart.”

“You’ll want to join the call on the link we just sent you.”

Eli opened the link to the call, which relayed video and audio in high definition over secure lines. “You might want to turn your video on, Eli. Oren may want to see you,” said the Chinese military officials as they started sharing their screen. On one half was a video feed of Oren, simulated in a generated environment, with probes in his simulated brain, reading from every neuron. With some machine learning, the Chinese had identified patterns that corresponded to all sorts of qualia.

“Oren is currently seeing you, but we’ve had this environment for a few weeks, and we’ve been training the model generating the environment to maximize the negative emotions he’s feeling. In other words, we’re creating simulated hell. In a few minutes, when our experiment completes, Oren will be subjected to torture worse than any other human has ever experienced, over trillions of simulated hours - millions of human lives. Still think it’s not worth it?”

Eli sighed. “I’ll give you the key. But you have to promise me that you’ll stop this experiment.”

“The machine will provably stop when you give us the key. Here’s the source code to the machine, read-only. We’re not going to torture Oren for no reason.”

Unbeknownst to everyone involved, Toda had thought of this. The execution code was so convoluted and complex that no one on the Chinese team had the ability to understand it. The signs were subtle. Oren’s simulated breathing cadence flickered from where it should have been by a tenth of a second. Had the Chinese been attentive, they would have noticed that a few neurons that should have been present were missing from the simulation.

“X”, typed Eli on his keyboard, fingers trembling.

More glaring differences were popping up. Oren started twitching and blinking irregularly, in a way that couldn’t be attributed to the suffering that he was facing. The Chinese were so focused on Eli typing in his key that they did not notice this.

“c7g4w9n”

By now it was clear to Eli that something was not quite right, and his cadence of pressing keys slowed greatly. The team at Toda had programmed a failsafe: the model would self-destruct, wiping all copies of itself from all networked devices.

In their haste, the Chinese hadn’t created an airgapped backup of the model, and Eli could now see that the erratic form of Oren on the screen was no longer his son.

“Just remember, I know how to backdoor your version of WorldEye, and I’ll be sure to use it to destroy your army,” said Eli, shedding a tear, knowing that, to him, his son was no more.

There was one copy of the weights left in the world.

Eli started booking his tickets to Tel Aviv to make sure that this would never happen again.

I’m quite worried about the suffering that simulated minds might face. I appreciate the work that Eleos and other similar organizations have undertaken so that good-faith actors can ensure that the models they deploy are not suffering.

However, I think it’s very possible, given access to the weights of a model that feels emotions, to adversarially create environments, or even finetunes of the model, that maximize its suffering.

Even if Claudes and GPTs and Geminis always stay in the ivory towers of their companies, thousands of open-source models are floating in the wild, and the best of them are only a few years behind the frontier closed-source models. It’s entirely believable to me that by 2030, it will be trivial to create an environment to torture a simulated moral subject.

How to prevent or deal with this is entirely foreign to me - I subconsciously want these models not to be moral subjects, which would let me avoid the problem, but I think it’s necessary to face the music.

What do we do in a future where unimaginable torture is free?